Adaptive fine-tuning as part of the standard transfer learning setting. Importantly, the model is fine-tuned with the pre-training objective, so adaptive fine-tuning only requires unlabelled data. Specifically, adaptive fine-tuning involves fine-tuning the model on additional data prior to task-specific fine-tuning, which can be seen below. Adaptive fine-tuning is a way to bridge such a shift in distribution by fine-tuning the model on data that is closer to the distribution of the target data. Adaptive fine-tuningĮven though pre-trained language models are more robust in terms of out-of-distribution generalisation than previous models ( Hendrycks et al., 2020), they are still poorly equipped to deal with data that is substantially different from the one they have been pre-trained on. Overview of fine-tuning methods discussed in this post. In particular, I will highlight the most recent advances that have shaped or are likely to change the way we fine-tune language models, which can be seen below. Consequently, fine-tuning is the main focus of this post. Fine-tuning is more important for the practical usage of such models as individual pre-trained models are downloaded-and fine-tuned-millions of times (see the Hugging Face models repository). While pre-training is compute-intensive, fine-tuning can be done comparatively inexpensively. The standard pre-training-fine-tuning setting (adapted from (Ruder et al., 2019)) The pre-trained model is then fine-tuned on labelled data of a downstream task using a standard cross-entropy loss.

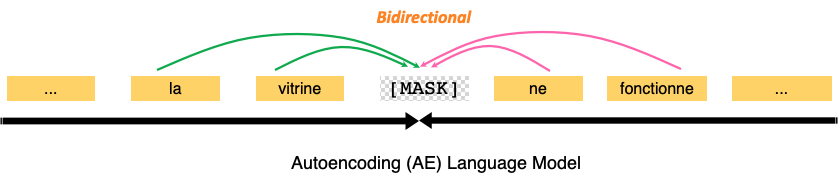

In the standard transfer learning setup (see below see this post for a general overview), a model is first pre-trained on large amounts of unlabelled data using a language modelling loss such as masked language modelling (MLM Devlin et al., 2019). The limitations of this zero-shot setting (see this section), however, make it likely that in order to achieve the best performance or stay reasonably efficient, fine-tuning will continue to be the modus operandi when using large pre-trained LMs in practice. Recent models are so large in fact that they can achieve reasonable performance without any parameter updates ( Brown et al., 2020). The empirical success of these methods has led to the development of ever larger models ( Devlin et al., 2019 Raffel et al., 2020). Over the last three years ( Ruder, 2018), fine-tuning ( Howard & Ruder, 2018) has superseded the use of feature extraction of pre-trained embeddings ( Peters et al., 2018) while pre-trained language models are favoured over models trained on translation ( McCann et al., 2018), natural language inference ( Conneau et al., 2017), and other tasks due to their increased sample efficiency and performance ( Zhang and Bowman, 2018). In addition to the music distribution expertise, finetunes is also your perfect partner for video monetization (YouTube).Fine-tuning a pre-trained language model (LM) has become the de facto standard for doing transfer learning in natural language processing. Tools like the Labeladmin or the valuable webbased services of PromoTool, Sales Master or Content Hub are supporting our partner's daily labeldriven work. Additionally, finetunes handles all its technical development and operations in-house, giving the company the ability to react fast, innovate and provide tailor made solutions for labels and distributors. 
finetunes’ global network of satellite offices in London, Paris and San Diego helps secure strategic, focused and truly co-ordinated international retail marketing for its releases.

A leading distributor in the field of electronic music, finetunes also represents labels from across the musical spectrum: from jazz to reggae, pop to world music. The Hamburg-based distribution and digital solutions company distributes a high quality and focused roster of international independent record labels. Finetunes has been creating opportunities for independent labels in the digital music markets for more than ten years.
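
To make the point about adaptive fine-tuning needing only unlabelled data more concrete, here is a minimal sketch of how a masked-language-modelling objective derives its training targets from the text itself. It is an illustration under stated assumptions, not the implementation used by any particular library: `MASK_ID`, `IGNORE_INDEX`, the 15% masking rate, and the helper names `mask_tokens` and `training_schedule` are all hypothetical choices made for this example, and the masking is simplified (every selected token is replaced by the mask id).

```python
import random

MASK_ID = 0          # hypothetical id of the [MASK] token (assumption)
IGNORE_INDEX = -100  # assumed label for positions excluded from the loss


def mask_tokens(token_ids, mask_prob=0.15, seed=None):
    """Turn unlabelled token ids into (inputs, labels) for an MLM-style loss.

    Simplified masking: each token is independently replaced by MASK_ID
    with probability `mask_prob`; its original id becomes the target.
    """
    rng = random.Random(seed)
    inputs, labels = [], []
    for tok in token_ids:
        if rng.random() < mask_prob:
            inputs.append(MASK_ID)       # hide the token from the model
            labels.append(tok)           # the original token is the target
        else:
            inputs.append(tok)           # token stays visible
            labels.append(IGNORE_INDEX)  # no loss at this position
    return inputs, labels


def training_schedule(adaptive=True):
    """List the stages as (name, data, objective) in the order they run.

    Adaptive fine-tuning, when used, sits between pre-training and
    task-specific fine-tuning and reuses the pre-training objective.
    """
    stages = [("pre-training", "unlabelled general-domain text", "MLM")]
    if adaptive:
        stages.append(
            ("adaptive fine-tuning", "unlabelled target-domain text", "MLM")
        )
    stages.append(("task fine-tuning", "labelled task data", "cross-entropy"))
    return stages


if __name__ == "__main__":
    # Made-up token ids standing in for a tokenised target-domain sentence.
    inputs, labels = mask_tokens([17, 42, 8, 99, 23, 5], seed=0)
    print(inputs, labels)
    for stage in training_schedule():
        print(stage)
```

Because the targets are simply the original tokens at the masked positions, the same function can be applied to general-domain text during pre-training and to target-domain text during adaptive fine-tuning. Only the final stage in the schedule needs labelled data.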
